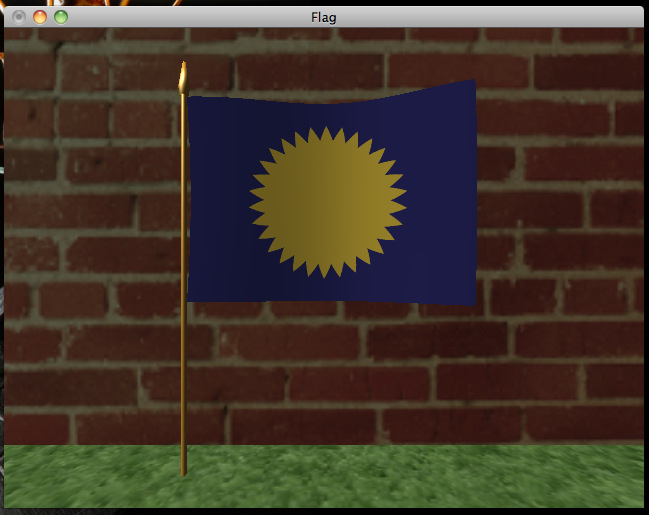

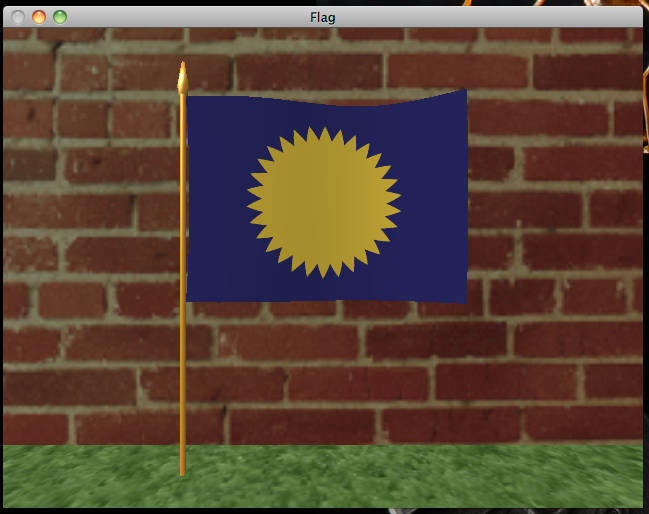

At this point, we've seen the most important core parts of the OpenGL API and gotten a decent taste of the GLSL language. Now's a good time to start exercising OpenGL and implementing some graphic effects, introducing new nuances and specialized features of OpenGL and GLSL as we go. For the next few chapters, I've prepared a new demo program you can get from my GitHub ch4-flag repository. The flag demo renders a waving flag on a flagpole against a simple background:

With the flat, wallpaper-looking grass and brick textures and the unnatural lack of shadow cast by the flag, it looks like something a Nintendo 64 would have rendered, but it's a start. We'll improve the graphical fidelity of the demo over the next few chapters. For this chapter, we'll render the above image by implementing the Phong shading model, which will serve as the basis for more advanced effects we'll look at later on.

Overview of the flag program

I've organized flag into four C files and four headers. You've already seen a good amount of it in hello-gl: the file-util.c and file-util.h files contain the read_tga and file_contents functions, and gl-util.c and gl-util.h contain the make_texture, make_shader, and make_program functions we wrote in chapter 2. The vec-util.h header contains some basic vector math functions. flag.c looks a lot like hello-gl.c did: in main, we initialize GLUT and GLEW, set up callbacks for GLUT events, call a make_resources function to allocate a bunch of GL resources, and call out to glutMainLoop to start running the demo. However, the setup and rendering are a bit more involved than last time. Let's look at what's new and changed:

Mesh construction

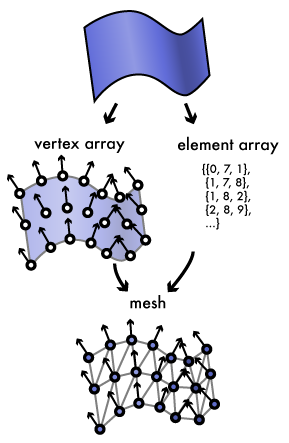

The meshes.c file contains code that generates the vertex and element arrays, collectively called a mesh, for the flag, flagpole, ground, and wall objects that we'll be rendering. Most objects in the real world, including real flagpoles and flags, have smooth curving surfaces, but graphics cards deal with triangles. To render these objects, we have to approximate their surfaces as a collection of triangles. We do this by filling a vertex array with vertices sampled along each object's surface, storing attributes of the surface with each vertex, and connecting the samples into triangles using the element array to give an approximation of the original surface.

The fundamental properties a mesh stores for each vertex are its position in world space and its normal, a vector perpendicular to the original surface. The normal is fundamental to shading calculations, as we'll see shortly. Normals should be unit vectors, that is, vectors whose length is one. Each vertex also has material parameters that indicate how the surface is shaded. The material can consist of a set of per-vertex values, texture coordinates that sample material information from a texture, or some combination of both.

For the flag demo, the material consists of a texture coordinate for sampling the diffuse color from the mesh texture, a specular color, and shininess factor. We'll see how these parameters are used shortly. Our vertex buffer thus contains an array of flag_vertex structs looking like this:

    struct flag_vertex {
        GLfloat position[4];
        GLfloat normal[4];
        GLfloat texcoord[2];
        GLfloat shininess;
        GLubyte specular[4];
    };

Although the position and normal are three-dimensional vectors, we pad them out to four elements because most GPUs prefer to load vector data from 128-bit-aligned buffers, like SIMD instruction sets such as SSE. For each mesh, we collect the vertex buffer, element buffer, texture object, and element count into a flag_mesh struct. When we render, we set up glVertexAttribPointers to pass all of the flag_vertex attributes to the vertex shader:

    struct flag_mesh {
        GLuint vertex_buffer, element_buffer;
        GLsizei element_count;
        GLuint texture;
    };

    static void render_mesh(struct flag_mesh const *mesh)
    {
        glBindTexture(GL_TEXTURE_2D, mesh->texture);

        glBindBuffer(GL_ARRAY_BUFFER, mesh->vertex_buffer);
        glVertexAttribPointer(
            g_resources.flag_program.attributes.position,
            3, GL_FLOAT, GL_FALSE, sizeof(struct flag_vertex),
            (void*)offsetof(struct flag_vertex, position)
        );
        glVertexAttribPointer(
            g_resources.flag_program.attributes.normal,
            3, GL_FLOAT, GL_FALSE, sizeof(struct flag_vertex),
            (void*)offsetof(struct flag_vertex, normal)
        );
        glVertexAttribPointer(
            g_resources.flag_program.attributes.texcoord,
            2, GL_FLOAT, GL_FALSE, sizeof(struct flag_vertex),
            (void*)offsetof(struct flag_vertex, texcoord)
        );
        glVertexAttribPointer(
            g_resources.flag_program.attributes.shininess,
            1, GL_FLOAT, GL_FALSE, sizeof(struct flag_vertex),
            (void*)offsetof(struct flag_vertex, shininess)
        );
        glVertexAttribPointer(
            g_resources.flag_program.attributes.specular,
            4, GL_UNSIGNED_BYTE, GL_TRUE, sizeof(struct flag_vertex),
            (void*)offsetof(struct flag_vertex, specular)
        );

        glBindBuffer(GL_ELEMENT_ARRAY_BUFFER, mesh->element_buffer);
        glDrawElements(
            GL_TRIANGLES,
            mesh->element_count,
            GL_UNSIGNED_SHORT,
            (void*)0
        );
    }
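Since render_mesh leans on sizeof and offsetof to describe the interleaved layout, it can be worth checking those numbers on your own compiler. The sketch below uses stand-in typedefs for GLfloat and GLubyte (an assumption, so that it compiles without the OpenGL headers), and the two helper functions are hypothetical names that aren't part of the demo:

```c
#include <stddef.h>

/* Stand-ins for the OpenGL typedefs so this compiles without GL headers.
   Assumption: GLfloat is a 4-byte float and GLubyte is an unsigned char,
   which holds on common desktop platforms. */
typedef float GLfloat;
typedef unsigned char GLubyte;

struct flag_vertex {
    GLfloat position[4];
    GLfloat normal[4];
    GLfloat texcoord[2];
    GLfloat shininess;
    GLubyte specular[4];
};

/* The stride handed to every glVertexAttribPointer call. */
static size_t flag_vertex_stride(void)
{
    return sizeof(struct flag_vertex);
}

/* Byte offset of the specular color inside one vertex. */
static size_t flag_vertex_specular_offset(void)
{
    return offsetof(struct flag_vertex, specular);
}
```

With 4-byte floats I'd expect a 48-byte stride, which is a multiple of 16, so consecutive vertices in the buffer keep the padded position and normal on 128-bit boundaries.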

Note that the glVertexAttribPointer call for the specular color attribute passes GL_TRUE for the normalized argument. The specular colors are stored as four-component arrays of bytes between 0 and 255, much as they would be in a bitmap image, but with the normalized flag set, they'll be presented to the shaders as normalized floating-point values between 0.0 and 1.0.
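As a rough illustration of what the normalized flag does to each unsigned byte component, here is the conversion written out in C. The function name is hypothetical, and the GPU does this for us; we never call anything like it in the demo:

```c
/* Model of how a GL_UNSIGNED_BYTE attribute component is presented to the
   shader when the normalized argument is GL_TRUE: 0..255 maps to 0.0..1.0. */
static float normalize_ubyte(unsigned char c)
{
    return (float)c / 255.0f;
}
```

So a specular color stored as the bytes { 255, 255, 0, 255 } would reach the shader as roughly vec4(1.0, 1.0, 0.0, 1.0).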

The actual code to generate the meshes is fairly tedious, so I'll just describe it at a high level. We construct two distinct meshes: the background mesh, created by init_background_mesh, which consists of the static flagpole, ground, and wall objects; and the flag, set up by init_flag_mesh. The background mesh consists of two large rectangles for the ground and wall, and a thin cylinder with a pointed truck making the flagpole. The wall, ground, and flagpole are assigned texture coordinates to sample out of a single texture atlas image containing the grass, brick, and metal textures, stored in background.tga. This allows the entire background to be rendered in a single pass with the same active texture. The flagpole is additionally given a yellow specular color, which will give it a metallic sheen when we shade it. The flag is generated by evaluating the function calculate_flag_vertex at regular intervals between zero and one on the s and t parametric axes, generating something that looks sort of like a flag flapping in the breeze. The flag being a separate mesh makes it easy to update the mesh data as the flag animates, and lets us render it with its own texture, loaded from flag.tga.
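To give a flavor of the tedious part, here is a minimal sketch of how an element array for a regular grid like the flag's could be built. It is not the demo's actual code: the resolution constants and the build_grid_elements name are made up for illustration, and it assumes vertices are stored row by row, as the s and t loops suggest.

```c
#include <stddef.h>

/* Hypothetical grid resolution; the demo's own constants are not shown here. */
enum { GRID_X_RES = 4, GRID_Y_RES = 3 };
enum { GRID_ELEMENT_COUNT = 6 * (GRID_X_RES - 1) * (GRID_Y_RES - 1) };

/* Writes two triangles per grid cell into out, assuming the vertex for
   sample (s, t) lives at index t * GRID_X_RES + s. Returns the number of
   indices written. With s pointing right and t pointing up, both triangles
   wind counterclockwise. */
static size_t build_grid_elements(unsigned short *out)
{
    size_t n = 0;
    int s, t;
    for (t = 0; t < GRID_Y_RES - 1; ++t) {
        for (s = 0; s < GRID_X_RES - 1; ++s) {
            unsigned short i00 = (unsigned short)(t * GRID_X_RES + s);
            unsigned short i10 = (unsigned short)(i00 + 1);
            unsigned short i01 = (unsigned short)(i00 + GRID_X_RES);
            unsigned short i11 = (unsigned short)(i01 + 1);
            /* Lower-right triangle of the cell. */
            out[n++] = i00; out[n++] = i10; out[n++] = i11;
            /* Upper-left triangle of the cell. */
            out[n++] = i00; out[n++] = i11; out[n++] = i01;
        }
    }
    return n;
}
```

The indices are unsigned short to match the GL_UNSIGNED_SHORT type that render_mesh passes to glDrawElements.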

Streaming dynamic mesh data

    void update_flag_mesh(
        struct flag_mesh const *mesh,
        struct flag_vertex *vertex_data,
        GLfloat time
    ) {
        GLsizei s, t, i;
        for (t = 0, i = 0; t < FLAG_Y_RES; ++t)
            for (s = 0; s < FLAG_X_RES; ++s, ++i) {
                GLfloat ss = FLAG_S_STEP * s, tt = FLAG_T_STEP * t;

                calculate_flag_vertex(&vertex_data[i], ss, tt, time);
            }

        glBindBuffer(GL_ARRAY_BUFFER, mesh->vertex_buffer);
        glBufferData(
            GL_ARRAY_BUFFER,
            FLAG_VERTEX_COUNT * sizeof(struct flag_vertex),
            vertex_data,
            GL_STREAM_DRAW
        );
    }

To animate the flag, we use our glutIdleFunc callback to recalculate the flag's vertices and update the contents of the vertex buffer. We update the buffer with the same glBufferData function we used to initialize it. However, both on initialization and on each update, we give the flag vertex data the GL_STREAM_DRAW hint instead of the GL_STATIC_DRAW hint we've been using until now. This tells the OpenGL driver to optimize for the fact that we'll be continuously replacing the buffer with new data. Since only the positions and normals of the vertices change, and the connectivity between vertices stays the same, the element buffer for the flag can remain static.

Using a depth buffer to order 3D objects

Since we're drawing multiple objects in 3D space, we need to ensure that objects closer to the viewer render on top of the objects behind them. An easy way to do this would be to just render the objects back-to-front (in our case, render the background mesh first, then the flag on top of it), but this is inefficient because of the overdraw this approach leads to: fragments get generated and processed by the fragment shader for background objects, only to be immediately overwritten by the foreground objects in front of them. Back-to-front rendering also cannot render mutually overlapping objects, such as two interlocked rings, on its own, because whichever object is rendered last will entirely overlap the other.

Graphics cards use depth buffers to provide efficient and reliable ordering of 3D objects. A depth buffer is a part of the framebuffer that sits alongside the color buffer, and like the color buffer, is a two-dimensional array of pixel values. Instead of color values, the depth buffer stores a depth value, associating a projection-space z coordinate to each pixel. When a triangle is rasterized with depth testing enabled, each fragment's projected z value is compared to the z value currently stored in the depth buffer. If the fragment would be further away from the viewer than the current depth buffer value, the fragment is discarded. Otherwise, the fragment gets rendered to the color and depth buffers, the new z value replacing the old depth buffer value.
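The depth test is simple enough to model in a few lines of C. This is only a toy software sketch of the idea, with made-up names and a 4x4 framebuffer; it isn't how the GPU implements it. It assumes OpenGL's default settings, where the buffer is cleared to the farthest depth of 1.0 and a fragment passes only if its z is less than the stored value.

```c
/* A toy framebuffer with a color buffer and a matching depth buffer. */
enum { FB_WIDTH = 4, FB_HEIGHT = 4 };

struct tiny_framebuffer {
    unsigned color[FB_WIDTH * FB_HEIGHT];
    float depth[FB_WIDTH * FB_HEIGHT];
};

/* Plays the role of clearing both buffers: every pixel gets the clear
   color and the farthest possible depth. */
static void tiny_clear(struct tiny_framebuffer *fb, unsigned clear_color)
{
    int i;
    for (i = 0; i < FB_WIDTH * FB_HEIGHT; ++i) {
        fb->color[i] = clear_color;
        fb->depth[i] = 1.0f;
    }
}

/* Returns 1 if the fragment was written, 0 if it failed the depth test.
   A fragment passes only when it is strictly closer than what is stored. */
static int tiny_write_fragment(struct tiny_framebuffer *fb,
                               int x, int y, float z, unsigned color)
{
    int i = y * FB_WIDTH + x;
    if (!(z < fb->depth[i]))
        return 0;
    fb->color[i] = color;
    fb->depth[i] = z;
    return 1;
}
```

Notice that the result at each pixel is the same no matter what order the fragments arrive in, which is exactly what frees us from having to sort our objects.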

In addition to providing correct ordering of objects, depth buffering also minimizes the cost of overdraw if you render objects front-to-back. Although the rasterizer will still generate fragments for parts of objects obscured by already-rendered objects, modern GPUs can discard these obscured fragments before they get run through the fragment shader, reducing the number of overall fragment shader invocations the processor needs to execute. Since our flag mesh appears in front of the background mesh, we thus render the flag before the background so that the obscured parts of the background don't need to be shaded.

To use depth testing in our program, we need to ask for a depth buffer in our framebuffer and then enable depth testing in the OpenGL state. With GLUT, we can ask for a depth buffer for a window by passing the GLUT_DEPTH flag to glutInitDisplayMode:

    int main(int argc, char* argv[])
    {
        glutInit(&argc, argv);
        glutInitDisplayMode(GLUT_RGB | GLUT_DEPTH | GLUT_DOUBLE);
        /* ... */
    }

We enable and disable depth testing by calling glEnable or glDisable with GL_DEPTH_TEST:

    static void init_gl_state(void)
    {
        /* ... */
        glEnable(GL_DEPTH_TEST);
        /* ... */
    }

When we start rendering our scene, we need to clear the depth buffer along with the color buffer to ensure that stale depth values don't affect rendering. We can clear both buffers with a single glClear call by passing it both GL_COLOR_BUFFER_BIT and GL_DEPTH_BUFFER_BIT:

    static void render(void)
    {
        glClear(GL_COLOR_BUFFER_BIT | GL_DEPTH_BUFFER_BIT);
        /* ... */
    }

Back-face culling

Another potential source of overdraw comes from within an object. If you look at the cylindrical flagpole from any direction, you're going to see at most half of its surface. The front-facing triangles appear in front of the back-facing triangles, but they rasterize into the same pixels on screen. Depending on the ordering of triangles in the mesh, the front-facing triangles will either overdraw the back-facing triangles or the fragments of the back-facing triangles will fail the depth test, requiring some extra work from the GPU in either case.

However, we can get the GPU to cheaply and quickly discard back-facing triangles even before they get rasterized or depth-tested. If we enable back-face culling, the graphics card will classify every triangle as front- or back-facing after running the vertex shader and immediately prior to rasterization, completely discarding back-facing triangles. It does this by looking at the winding of each triangle in projection space. By default, triangles winding counterclockwise are considered front-facing. This works because transforming a triangle to face the opposite direction from the viewer reverses its winding. By constructing our meshes so that all of the triangles wind counterclockwise when viewed from the front, we can use back-face culling to eliminate most of the work of rasterizing those triangles when they face away from the viewer. Only the vertex shader will need to run for their vertices.
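To make the winding test concrete, here is a small sketch of how a triangle's facing can be classified from its projected 2D vertices using the sign of its area. The function names are hypothetical, and the hardware's actual implementation may differ; this only illustrates the principle, assuming a y-up coordinate system.

```c
/* Twice the signed area of the 2D triangle abc. With the y axis pointing
   up, the result is positive when a, b, c wind counterclockwise and
   negative when they wind clockwise. */
static float signed_area2(const float a[2], const float b[2], const float c[2])
{
    return (b[0] - a[0]) * (c[1] - a[1]) - (c[0] - a[0]) * (b[1] - a[1]);
}

/* Front-facing under the default convention described above:
   counterclockwise winding. Degenerate (zero-area) triangles count as
   not front-facing in this sketch. */
static int is_front_facing(const float a[2], const float b[2], const float c[2])
{
    return signed_area2(a, b, c) > 0.0f;
}
```

Swapping any two vertices flips the sign, which is the same thing that happens on screen when a triangle rotates to face away from the viewer.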

Back-face culling is enabled and disabled by passing GL_CULL_FACE to glEnable/glDisable:

    static void init_gl_state(void)
    {
        /* ... */
        glEnable(GL_CULL_FACE);
        /* ... */
    }

Updating the projection matrix and viewport

If you go back a chapter and try resizing the hello-gl window, you'll notice that the image stretches to fit the new size of the window, ruining the aspect ratio we worked so hard to preserve. In order to maintain an accurate aspect ratio, we have to recalculate our projection matrix when the window size changes, taking the new aspect ratio into account. We also have to inform OpenGL of the new viewport size by calling glViewport. GLUT allows us to provide a callback that gets invoked when the window is resized using glutReshapeFunc:

    static void reshape(int w, int h)
    {
        g_resources.window_size[0] = w;
        g_resources.window_size[1] = h;
        update_p_matrix(g_resources.p_matrix, w, h);
        glViewport(0, 0, w, h);
    }

    int main(int argc, char* argv[])
    {
        /* ... */
        glutReshapeFunc(&reshape);
        /* ... */
    }

The update_p_matrix function implements the perspective matrix formula from last chapter and stores the new projection matrix in the g_resources.p_matrix array, from which we'll feed our shaders' p_matrix uniform variable.

Handling mouse and keyboard input with GLUT

GLUT provides extremely primitive support for mouse and keyboard input. In flag, I've made it so that dragging the mouse moves the view around, and the view snaps back to its original position when the mouse button is released. GLUT offers a glutMotionFunc callback that gets called when the mouse moves while a button is held down and a glutMouseFunc that gets called when a mouse button is pressed or released. (There's also glutPassiveMotionFunc to handle mouse motion when a button isn't pressed, which we don't use.) Our glutMotionFunc adjusts the model-view matrix relative to the distance from the center of the window, and our glutMouseFunc resets it when the mouse button is let go:

    static void drag(int x, int y)
    {
        float w = (float)g_resources.window_size[0];
        float h = (float)g_resources.window_size[1];
        g_resources.eye_offset[0] = (float)x/w - 0.5f;
        g_resources.eye_offset[1] = -(float)y/h + 0.5f;
        update_mv_matrix(g_resources.mv_matrix, g_resources.eye_offset);
    }

    static void mouse(int button, int state, int x, int y)
    {
        if (button == GLUT_LEFT_BUTTON && state == GLUT_UP) {
            g_resources.eye_offset[0] = 0.0f;
            g_resources.eye_offset[1] = 0.0f;
            update_mv_matrix(g_resources.mv_matrix, g_resources.eye_offset);
        }
    }

    int main(int argc, char* argv[])
    {
        /* ... */
        glutMotionFunc(&drag);
        glutMouseFunc(&mouse);
        /* ... */
    }

The update_mv_matrix function is similar to update_p_matrix. It generates a translation matrix, following the formula from last chapter, and stores it to g_resources.mv_matrix, from which we feed the shaders' mv_matrix uniform variable.

I also rigged flag so you can reload the GLSL program from disk while the demo is running by pressing the R key. The glutKeyboardFunc callback gets called when a key is pressed. Our callback checks if the pressed key was R, and if so, calls update_flag_program:

    static void keyboard(unsigned char key, int x, int y)
    {
        if (key == 'r' || key == 'R') {
            update_flag_program();
        }
    }

    int main(int argc, char* argv[])
    {
        /* ... */
        glutKeyboardFunc(&keyboard);
        /* ... */
    }

update_flag_program attempts to load, compile, and link the flag.v.glsl and flag.f.glsl files from disk, and if successful, replaces the old shader and program objects.

That covers the C code for the flag demo. The actual shading happens inside the GLSL code, which we'll look at next.

Phong shading

Physically accurate light simulation requires expensive algorithms that have only recently become possible for even high-end computer clusters to calculate in real time. Fortunately, human eyes don't require perfect physical accuracy, especially not for fast-moving animated graphics, and real-time computer graphics has come a long way rendering impressive graphics on typical consumer hardware using cheap tricks that approximate the behavior of light without simulating it perfectly. The most fundamental of these tricks is the Phong shading model, an inexpensive approximation of how light interacts with simple materials developed by computer graphics pioneer Bui Tuong Phong in the early 1970s. Phong shading is a local illumination simulation—it only considers the direct interaction between a light source and a single point. Because of this, Phong shading alone cannot calculate effects that involve the influence of other objects in a scene, such as shadows and mirror reflections. This is why the flag casts no shadow on the ground or wall behind it.

The Phong model involves three different lighting terms:

- ambient reflection, a constant term that simulates the background level of light;

- diffuse reflection, which gives the material what we usually think of as its color;

- and specular reflection, the shine of polished or metallic surfaces.

Diffuse and ambient reflection

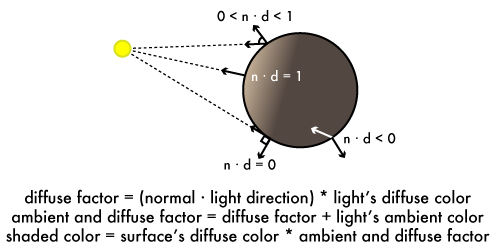

If you hold a flat sheet of paper up to a lamp in a dark room, it will appear brightest when it faces the lamp head-on, and appear dimmer as you rotate it away from the light, reaching its darkest when it's perpendicular to the light. Curved surfaces behave the same way; if you roll up or crumple the paper, its surface will be brightest where it faces the light the most directly. The wider the angle between the surface normal and the light direction, the darker the paper appears. If the paper and light remain stationary but you move your head, the paper's apparent color and brightness won't change. Likewise, in the flag demo, if you drag the view with the mouse, you can see the flag's shading remains the same. The surface reflects light evenly in every direction, or "diffusely." This basic lighting effect is thus called diffuse reflection.

There's an inexpensive operation called the dot product that produces a scalar value from two vectors related to the angle between them. Given two unit vectors u and v, if their dot product u · v (pronounced "u dot v") is one, then the vectors face the exact same direction; if zero, they're perpendicular; and if negative one, they face exact opposite directions. Positive dot products indicate acute angles while negative dot products indicate obtuse angles. GLSL provides a function dot(u,v) to calculate the dot product of two same-sized vec values.

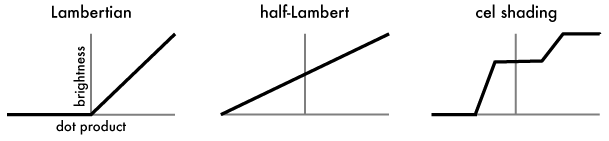
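If you'd like to see the arithmetic behind the dot product, it is just the sum of the products of corresponding components. Here is a minimal C equivalent for three-component vectors (the dot3 name is mine; in GLSL you'd simply call dot):

```c
/* Dot product of two three-component vectors. For unit vectors, the
   result equals the cosine of the angle between them. */
static float dot3(const float u[3], const float v[3])
{
    return u[0] * v[0] + u[1] * v[1] + u[2] * v[2];
}
```

For example, the unit x axis dotted with itself gives one, with the unit y axis gives zero, and with the negative x axis gives negative one, matching the three cases described above.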

The dot product's behavior follows that of diffuse reflection: surfaces reflect more light the more directly they face a light source, or in other words, the closer the dot product of their normal and the light's direction gets to one. Perpendicular or back-facing surfaces reflect no light, and their dot product will be zero or negative. This relationship between the dot product and diffuse brightness was first observed by 18th-century physicist Johann Lambert and is referred to as Lambertian reflectance, and surfaces that exhibit the behavior are called Lambertian surfaces. Phong shading uses Lambertian reflectance to model diffuse reflection, taking the dot product of the surface normal and the direction from the surface to the light source. If the dot product is greater than zero, it is multiplied by the diffuse color of the light, and the result is multiplied with the surface diffuse color to get the shaded result. (Multiplying two color values involves multiplying their corresponding red, green, blue, and alpha components together, which is what GLSL's * operator does when given two vec4s.) If the dot product is zero or negative, the diffuse color will be zero.

However, in the real world, even when a surface isn't directly lit, it still won't appear pitch black. In any enclosed area, there will be a certain amount of ambient light bouncing around, dimly illuminating areas that the light sources don't directly hit. The Phong model simulates the ambient effect by assigning light sources a constant ambient color. This ambient color gets added to the light's diffuse color after it's been multiplied by the dot product. The sum of ambient and diffuse effect colors is then multiplied by the surface's diffuse color to give the shaded result.

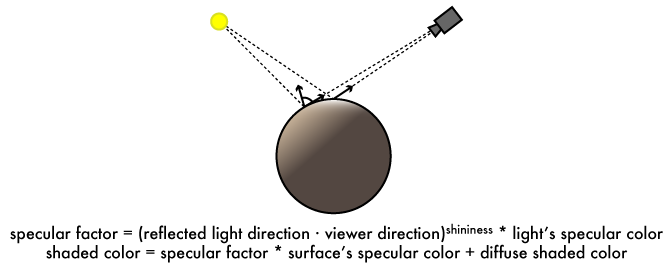
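Here is the ambient-plus-diffuse calculation written out as plain C, as a sketch of the arithmetic rather than code from the demo. The struct and function names are hypothetical. It takes the direction from the surface to the light, as in the description above, and assumes both vectors are already unit length.

```c
struct rgba { float r, g, b, a; };

/* n is the unit surface normal and to_light is the unit vector from the
   surface toward the light. The clamped dot product scales the light's
   diffuse color, the ambient color is added, and the sum is multiplied
   component-wise by the surface's diffuse color. */
static struct rgba shade_diffuse(const float n[3], const float to_light[3],
                                 struct rgba light_diffuse,
                                 struct rgba light_ambient,
                                 struct rgba surface_diffuse)
{
    float d = n[0] * to_light[0] + n[1] * to_light[1] + n[2] * to_light[2];
    float lambert = d > 0.0f ? d : 0.0f;
    struct rgba out;
    out.r = (lambert * light_diffuse.r + light_ambient.r) * surface_diffuse.r;
    out.g = (lambert * light_diffuse.g + light_ambient.g) * surface_diffuse.g;
    out.b = (lambert * light_diffuse.b + light_ambient.b) * surface_diffuse.b;
    out.a = (lambert * light_diffuse.a + light_ambient.a) * surface_diffuse.a;
    return out;
}
```

A surface facing directly away from the light still receives the ambient term, so it ends up dim rather than black.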

Specular reflection

Not all surfaces reflect light uniformly; many materials, including metals, glass, hair, and skin, have a reflective sheen. Unlike with diffuse reflection, if the viewer moves while a light source and shiny object remain stationary, the shine will move along the surface with the viewer. You can see this simulated in the flag demo by looking at the flagpole: as you drag the view up and down, the gold sheen moves along the pole with you. Physically, an object appears shiny when its surface is covered in highly reflective microfacets. These facets face every direction, creating a bright shiny spot where the light source reflects directly toward the viewer. This effect is called specular reflection.

The specular effect is caused by reflection from the light source to the viewer, so Phong shading simulates the specular effect by reflecting the light direction around the surface normal to give a reflection direction. We can then take the dot product of the reflection direction and the direction from the surface to the viewer. Microfacets on a specular surface follow a normal distribution: a plurality of facets lie parallel to the surface, and there is an exponential dropoff in the number of facets at steeper angles from the surface. The dropoff is sharper for more polished surfaces, giving a smaller, tighter specular highlight. Phong shading approximates this distribution by raising the dot product to an exponent called the shininess factor, with higher shininess giving a more polished shine and lower factors giving a more diffuse sheen. This final specular factor is then multiplied by the specular colors of the light source and surface, and the result added to the diffuse and ambient colors to give the final color. Non-specular surfaces have a transparent specular color with red, green, blue, and alpha components set to zero, which eliminates the specular term from the shading equation.

Implementing Phong shading in GLSL

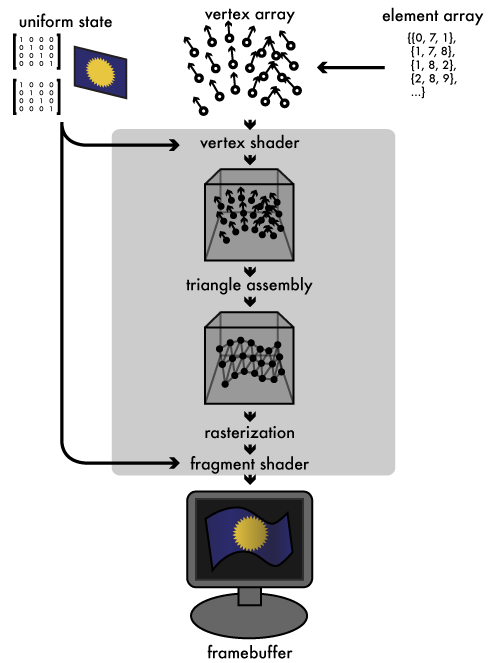

Shading calculations are usually performed in the vertex and fragment shaders, where they can leverage the GPU's parallel processing power. (This is where the term "shader" for GPU programs comes from.) Let's bring back the graphics pipeline diagram to get an overview of the Phong shading dataflow:

For the best accuracy, we perform shading at a per-fragment level. (For better performance, shading can also be done in the vertex shader and the results interpolated between vertices, but this will lead to less accurate shading, especially for specular effects.) The vertex shader, flag.v.glsl, thus only performs transformation and projection, using the p_matrix and mv_matrix we pass in as uniforms. The shader forwards most of the material vertex attributes to varying variables for the fragment shader to use:

    #version 110

    uniform mat4 p_matrix, mv_matrix;
    uniform sampler2D texture;

    attribute vec3 position, normal;
    attribute vec2 texcoord;
    attribute float shininess;
    attribute vec4 specular;

    varying vec3 frag_position, frag_normal;
    varying vec2 frag_texcoord;
    varying float frag_shininess;
    varying vec4 frag_specular;

    void main()
    {
        vec4 eye_position = mv_matrix * vec4(position, 1.0);
        gl_Position = p_matrix * eye_position;
        frag_position = eye_position.xyz;
        frag_normal   = (mv_matrix * vec4(normal, 0.0)).xyz;
        frag_texcoord = texcoord;
        frag_shininess = shininess;
        frag_specular = specular;
    }

In addition to the texture coordinate, shininess, and specular color, the vertex shader also outputs to the fragment shader the model-view-transformed vertex position. The model-view matrix transforms the coordinate space so that the viewer is at the origin, so we can determine the surface-to-viewer direction needed by the specular calculation from this transformed position. We likewise transform the normal vector to keep it in the same frame of reference as the position. Since the normal is a directional vector without a position, we apply the matrix to it with a w component of zero, which cancels out the translation of the model-view matrix and only applies its rotation. With this set of varying values, the fragment shader, flag.f.glsl, can perform the actual Phong calculation:

    #version 110

    uniform mat4 p_matrix, mv_matrix;
    uniform sampler2D texture;

    varying vec3 frag_position, frag_normal;
    varying vec2 frag_texcoord;
    varying float frag_shininess;
    varying vec4 frag_specular;

    const vec3 light_direction = vec3(0.408248, -0.816497, 0.408248);
    const vec4 light_diffuse = vec4(0.8, 0.8, 0.8, 0.0);
    const vec4 light_ambient = vec4(0.2, 0.2, 0.2, 1.0);
    const vec4 light_specular = vec4(1.0, 1.0, 1.0, 1.0);

    void main()
    {
        vec3 mv_light_direction = (mv_matrix * vec4(light_direction, 0.0)).xyz,
             normal = normalize(frag_normal),
             eye = normalize(frag_position),
             reflection = reflect(mv_light_direction, normal);

        vec4 frag_diffuse = texture2D(texture, frag_texcoord);
        vec4 diffuse_factor
            = max(-dot(normal, mv_light_direction), 0.0) * light_diffuse;
        vec4 ambient_diffuse_factor
            = diffuse_factor + light_ambient;
        // Clamp the dot product before calling pow: GLSL leaves pow()
        // undefined when its first argument is negative.
        vec4 specular_factor
            = pow(max(-dot(reflection, eye), 0.0), frag_shininess)
                * light_specular;

        gl_FragColor = specular_factor * frag_specular
            + ambient_diffuse_factor * frag_diffuse;
    }
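Both shaders multiply direction vectors by mv_matrix with a w component of zero. To see why that drops the translation, here is a small C sketch of a 4x4 matrix times a 4-component vector. It assumes OpenGL's usual column-major storage, and mat4_mul_vec4 is a hypothetical helper name, not something from the demo's vec-util.h.

```c
/* Multiplies the column-major 4x4 matrix m by the vector v. Element
   (row, col) of the matrix is stored at m[col * 4 + row]. */
static void mat4_mul_vec4(float out[4], const float m[16], const float v[4])
{
    int row, col;
    for (row = 0; row < 4; ++row) {
        out[row] = 0.0f;
        for (col = 0; col < 4; ++col)
            out[row] += m[col * 4 + row] * v[col];
    }
}
```

The translation lives in the fourth column of the matrix, and that column gets multiplied by w. With w set to one the translation is added in, which is what we want for positions; with w set to zero it vanishes, which is what we want for normals and light directions.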

To keep things simple, the shader defines a single light source using const values in the shader source. A real renderer would likely feed these light parameters in as uniform values, so that lights can be moved or their material attributes changed from the host program. With the light attributes embedded in the GLSL as constants, it's easy to change the light attributes in the source, press R to reload the shader, and see the result. Our light source acts as if it were infinitely far away, shining from the same light_direction on every surface in the scene. The light is white, with a 20% baseline ambient light level. It can be made colored by changing light_diffuse, light_ambient, and light_specular to other RGBA values.

The fragment shader uses several new GLSL functions we haven't seen before:

- normalize(v) returns a unit vector with the same direction as v. We use it here to convert the fragment position into a direction vector, and to ensure that the normal is a unit vector. Even if our original vertex normals are all unit vectors, their linear interpolations won't be.

- pow(x,n) raises x to the nth power, which we use to apply the specular shininess factor.

- max(n,m) returns the larger of n or m. We use it to clamp dot products less than zero so they shade the same as if they were zero.

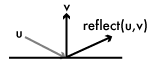

- reflect(u,v) reflects the vector u around v, giving a vector that makes the same angle with v as u, but in the opposite direction. With it we derive the reflected light direction for the specular calculation.


We transform our constant light_direction to put it in the same coordinate space as the normal and eye vectors. We then sample the surface's diffuse color from the mesh texture and combine it with the ambient, diffuse, and specular factors as described above. We assign the shaded value to gl_FragColor to generate the final shaded fragment.

Tweaking the Phong model for stylistic effects

Before we wrap things up, let's take a quick look at how the Phong framework can be manipulated to give more stylized results. The classic Phong model is a photorealistic model: it attempts to model real-world light behavior. But photorealism isn't always desirable. Many games set themselves apart visually by using more stylized shading effects. These effects often use the basic Phong model of diffuse, ambient, and specular lighting, but they warp the individual factors before summing them together.

As a trivial example, we can get a brighter, softer shading effect if, instead of clamping the diffuse dot product of back-facing surfaces to zero, we scale it so that perpendicular surfaces receive half illumination, and back-facing surfaces scale linearly toward zero. Team Fortress 2 uses this "half Lambert" reflectance scale, so called because the standard Lambertian dropoff rate is halved, as a basis for its cartoonish but semi-photorealistic look (albeit heavily modified). Let's modify flag.f.glsl to warp the diffuse dot product:

    float warp_diffuse(float d)
    {
        return d * 0.5 + 0.5;
    }

    void main()
    {
        // ...
        vec4 diffuse_factor
            = max(warp_diffuse(-dot(normal, mv_light_direction)), 0.0) * light_diffuse;
        // ...
    }

A popular effect that builds from this half-Lambert scale is cel shading, in which a stair-step function is applied to the half-Lambert factor so that surfaces are shaded flatly with higher contrast between light and dark areas, in the style of traditional hand-drawn animation cels. Jet Set Radio pioneered this look, and it's since been used in countless games. Implementing it in GLSL is easy:

    float cel(float d)
    {
        return smoothstep(0.35, 0.37, d) * 0.4 + smoothstep(0.70, 0.72, d) * 0.6;
    }

    float warp_diffuse(float d)
    {
        return cel(d * 0.5 + 0.5);
    }

GLSL's smoothstep(lo,hi,x) function behaves like this: if x is less than lo, it returns 0.0; if greater than hi, it returns 1.0; if in between, it transitions smoothly from zero to one along an S-shaped Hermite curve rather than a straight line. Our cel function above uses smoothstep to create three flat shading levels with short, smooth transitions in between.
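If you want to tabulate the shading levels without running the demo, the functions port to C almost verbatim. In this sketch, smoothstep_f is my own stand-in for the GLSL built-in, following the Hermite formula given in the GLSL specification (clamp to the 0 to 1 range, then t * t * (3 - 2 * t)); cel and warp_diffuse mirror the shader code above.

```c
/* C stand-in for GLSL's smoothstep: clamps the normalized position of x
   between lo and hi to the 0..1 range, then applies the Hermite curve
   t * t * (3 - 2 * t). */
static float smoothstep_f(float lo, float hi, float x)
{
    float t = (x - lo) / (hi - lo);
    if (t < 0.0f) t = 0.0f;
    if (t > 1.0f) t = 1.0f;
    return t * t * (3.0f - 2.0f * t);
}

/* Three flat levels (0.0, 0.4, 1.0) with narrow transitions between. */
static float cel(float d)
{
    return smoothstep_f(0.35f, 0.37f, d) * 0.4f
         + smoothstep_f(0.70f, 0.72f, d) * 0.6f;
}

/* Half-Lambert remap from -1..1 to 0..1, followed by the stair step. */
static float warp_diffuse(float d)
{
    return cel(d * 0.5f + 0.5f);
}
```

Feeding in dot products of -1, 0, and 1 lands on the three levels: fully back-facing surfaces get 0.0, perpendicular surfaces get 0.4, and surfaces facing the light head-on get 1.0.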

There are other effects that can be performed by messing with the warp_diffuse function. For example, the function doesn't need to be float-to-float but could also map to a color scale; you could map greater dot products to warmer reddish colors while lesser products map to cooler bluish colors to give an artistic illustration effect. I encourage you to experiment with the fragment shader code to see what other effects you can create.

Conclusion

With Phong shading implemented, we can start adding additional effects to further improve the look of the flag scene. The most glaring problem is the lack of shadow cast by the flag, so next chapter we'll look at shadow mapping, a technique for rendering accurate shadows into a scene, and learn about off-screen framebuffer objects in the process. Meanwhile, if you're interested in learning more about real-time shading techniques on your own without an OpenGL bias, I highly recommend the book Real-Time Rendering.